Last week I did a write-up on how to replace MAC-Based Forwarding (MBF) with Policy Based Routing (PBR). This week I want to give you some background on why this should be your general best practice, using Hyper-V as the example.

I was facing a strange NetScaler troubleshooting engagement the other day. Services were randomly flapping and the overall connectivity to the applications published through NetScaler was bad.

Investigation

NetScaler was a separate physical box but the same problem should apply to any form-factor.

At first I blamed the network. But further analysis showed that only a small subset of services seemed to be affected: those hosted on Hyper-V. So I started blaming Hyper-V, but it turned out it wasn’t that simple.

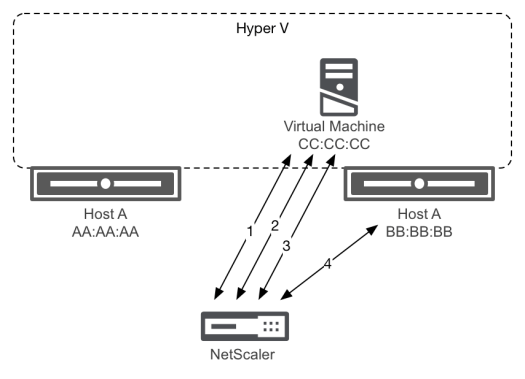

Drilling deeper into the issue, I could see a significantly high number of TCP retransmissions on the affected sessions. It took me some time, but then I noticed that different MAC addresses were involved in a single session: one Intel-tagged and one Microsoft-tagged. The Microsoft one was the VM’s MAC, the Intel one the current host’s physical NIC.

Interestingly enough, most issues seemed to follow a pattern:

- Microsoft MAC speaks to NetScaler happily

- Intel MAC comes into the picture

- Session struggles
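The pattern above can be spotted in an exported trace with a few lines of Python. This is only a minimal sketch: it assumes the frames have already been reduced to (source IP, source MAC) pairs, and the addresses and the function name `flows_with_multiple_macs` are made up for illustration.

```python
from collections import defaultdict

def flows_with_multiple_macs(frames):
    """Return {src_ip: set_of_macs} for every source IP seen with more than one source MAC."""
    macs_by_ip = defaultdict(set)
    for src_ip, src_mac in frames:
        # Normalise case so the same MAC is not counted twice.
        macs_by_ip[src_ip].add(src_mac.lower())
    return {ip: macs for ip, macs in macs_by_ip.items() if len(macs) > 1}

# Hypothetical frames as (source IP, source MAC) pairs taken from a trace.
frames = [
    ("10.0.0.20", "00:15:5d:01:02:03"),  # stand-in for the VM's virtual MAC
    ("10.0.0.20", "00:15:5D:01:02:03"),  # same MAC, different case
    ("10.0.0.20", "00:1b:21:aa:bb:cc"),  # stand-in for the host's physical NIC
    ("10.0.0.30", "00:50:56:00:00:01"),  # unrelated server with a single MAC
]

suspects = flows_with_multiple_macs(frames)
for ip, macs in suspects.items():
    print(ip, sorted(macs))
```

Any IP that shows up in the result is a candidate for the behaviour described here and worth a closer look in the trace.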

Root cause

So it seems Hyper-V bounces MACs in certain scenarios. While this is not necessarily a problem on its own, it can become one. Meet MAC-Based Forwarding.

OK, blaming MBF covers only one part of the problem. The other part is that Hyper-V apparently strictly expects responses on the virtual MAC while sending out traffic sourced from both MACs.

With MBF, NetScaler sends its reply to the MAC address the request came from. So the moment Hyper-V decides to use the physical MAC, the replies go to that MAC and Hyper-V drops them. That’s exactly what happened in the trace above.
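To make the interaction concrete, here is a toy model in Python. It is a simplification under stated assumptions, not NetScaler's or Hyper-V's actual implementation: MBF is modelled as "reply to the source MAC of the request", the routed/PBR case is modelled as "reply to the MAC resolved for the next hop" (assumed here to be the VM's virtual MAC), and Hyper-V is modelled as accepting only frames addressed to the virtual MAC. All names and addresses are hypothetical.

```python
VM_MAC = "00:15:5d:01:02:03"    # hypothetical virtual NIC MAC of the VM
HOST_MAC = "00:1b:21:aa:bb:cc"  # hypothetical physical NIC MAC of the host

def hyperv_accepts(dst_mac):
    """Model of the observed behaviour: only frames sent to the VM's MAC get through."""
    return dst_mac == VM_MAC

def reply_mac_mbf(request_src_mac, resolved_mac):
    """Simplified MBF: answer to whatever MAC the request came from."""
    return request_src_mac

def reply_mac_routed(request_src_mac, resolved_mac):
    """Simplified routing/PBR: answer to the MAC resolved for the next hop."""
    return resolved_mac

def delivered(reply_mac_fn, request_src_macs, resolved_mac=VM_MAC):
    """For each request, report whether the reply would reach the VM."""
    return [hyperv_accepts(reply_mac_fn(src, resolved_mac)) for src in request_src_macs]

# A session in which the third packet is suddenly sourced from the physical MAC.
session = [VM_MAC, VM_MAC, HOST_MAC, VM_MAC]

mbf_result = delivered(reply_mac_mbf, session)
routed_result = delivered(reply_mac_routed, session)
print("MBF:   ", mbf_result)
print("Routed:", routed_result)
```

In this model the MBF reply to the third packet is lost, which on a real TCP session would show up as a retransmission, while the routed variant keeps answering on the virtual MAC regardless of which MAC the request was sourced from.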

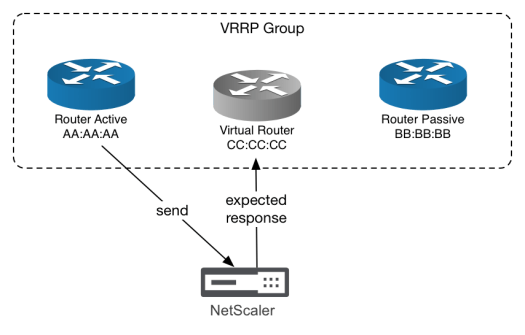

I’ve seen the same behavior with some routers using VRRP in the past. They sent out all traffic on the physical interface but expected all responses on the virtual interface.

However, this was only a small subset of the routers I’ve encountered, not all that use VRRP or other HA mechanisms. Still, it shows that this is a sporadically recurring issue between NetScaler, MBF and some vendors.

Solutions

In the case of Hyper-V, the problem can be solved on both ends of the connection.

A fellow blogger, Dale Scriven, pointed out in his article on the vHorizon blog that this can also be solved by changing the Hyper-V NIC teaming load balancing setting from “Dynamic” to “Hyper-V Port”. Read the full story here: NetScaler VPX and Hyper-V flapping

Personally, I prefer to solve it on the NetScaler and prevent the “unexpected” responses to the sudden MAC change. This can be done by disabling MAC-Based Forwarding (MBF) and enabling Policy Based Routing (PBR).

Now, I don’t know who’s right or wrong here, NetScaler or Hyper-V. I couldn’t find sufficient evidence in the RFCs I’ve skimmed to blame either of them. But I know that for the last few years I’ve avoided MBF, and my PBR concept has never confronted me with any of the nasty network surprises that I occasionally face with MBF.

The fundamental issue with the NS’er is that if there are numerous NICs enabled (2-arm mode), all interfaces will be in the native VLAN by default. Having numerous interfaces in the same broadcast domain without LAGing them will induce MAC moves. This can easily be seen in the shell of the NS’er by leveraging the ‘nsconmsg’ command with the counter ‘bdg_mac’. Leverage the guidance in the following post to see if MAC moves are occurring: https://www.citrix.com/blogs/2014/05/02/netscaler-counters-grab-bag/

A simple solution is to create access VLANs for each of the interfaces other than the DG interface to remove them from the native VLAN. Since tagging is not in play, you’ll see no issues, but you will see the MAC moves cease. When too many MAC moves occur, interface ‘mutes’ occur, which fundamentally means that zero packets flow from that interface in order to avoid a bridge loop.

PBR will not be needed IMO.


Yes, VLANs are another point that gets misconfigured waaay too often!

The MAC flapping happened on Hyper-V, not on NS. So the flapping itself can’t be fixed on NS.

But MBF didn’t deal with the flapping well and PBR did, hence that was my solution.

Besides that, the need for PBR/MBF applies as soon as you have multi-arm deployments, regardless of whether you have VLANs configured or not.

NetScaler would always route to the default gateway or closest route if you don’t do PBR or MBF. Therefore it potentially creates asymmetric routing if you need to access two different interfaces from the same client subnet.

The VLANs aren’t taken into consideration in routing decisions, as NS behaves like a router and will always choose the shortest path it knows.
